Update #44: Challenges for Personal Robotics and Cheaply Poisoning Web-Scale Datasets

Everyday Robots' shutdown is indicative of broader troubles personal robotics companies face; researchers find inexpensive ways to poison datasets used to train modern ML models.

Welcome to the 44th update from the Gradient! If you’re new and like what you see, subscribe and follow us on Twitter :) You’ll need to view this post on Substack to see the full newsletter!

Want to write with us? Send a pitch using this form.

News Highlight: Everyday Robots Disbands, Uncertainty for the Personal Robotics Industry Continues

Summary

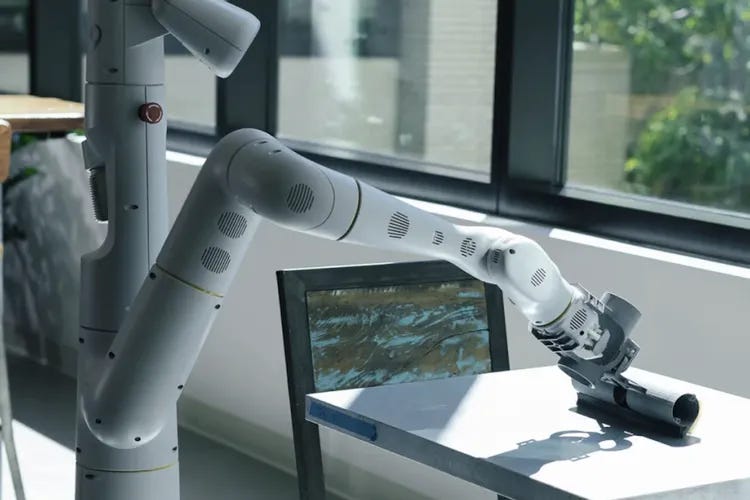

Everyday Robots, a company that spun out of Alphabet’s X, the moonshot factory, is reportedly being shut down as part of Google’s recent layoffs. The organization’s work has been a critical component of Google’s robotics initiatives: the team developed hundreds of robots that famously roam Google’s kitchen, clean tables, and sort trash. The closure of Everyday Robots is further evidence of the challenging landscape that personal robotics companies have had to navigate over the past decade. While advances in AI have allowed researchers to make significant strides in robotics research, commercializing these technologies remains an uphill task.

Background

The idea of a personal robot is not new; scientists have been tinkering with it since as early as the 1920s, when William H. Richards designed a robot that could tell time, shake hands, and sit down when told to do so. We have come a long way since then, and many companies have tried their hand at building an autonomous household helper. However, while the technological capabilities of personal robots have advanced steadily, their commercial success has not grown at the same pace.

Willow Garage today exists as an urban legend in Silicon Valley, told by those who are old enough to remember it or fortunate enough to have worked there. Founded in 2006, the company is largely credited with creating the Robot Operating System (ROS) and the PR2 robot, both of which are still used by many organizations for research. Willow Garage’s founder, Scott Hassan, pulled investment from the company in late 2013. The company finally shut down in 2014, but spun off into nine smaller companies focused on more tractable problems. Of these, three were acquired by Google, four have since shut down, and only two survive today as non-profit organizations: OpenCV and Open Robotics.

Others attempted to build similar products, but their companies met similar ends. Mayfield Robotics launched in 2015 and shipped the Kuri home robot in 2017. The company spun out of Bosch’s startup platform and eventually shut down when Bosch could not find a suitable business fit for the home robot. Anki, a company that aimed to make social robots, raised almost $200 million in funding from major venture capital firms such as a16z, Index Ventures, and Two Sigma. The startup launched two products and approached $100 million in revenue in 2017, but went bankrupt in April 2019 and shut down soon thereafter.

Three years after Google acquired eight robotics companies in 2013, Everyday Robots started as an early-stage project inside X (here’s a deep dive into the history of robotics at Google). Researchers on the team trained a mobile manipulator to follow human commands to navigate, collect dishes, load them into a dishwasher, sort trash, and clean tables. In late 2021, Everyday Robots graduated from X and became an independent Alphabet subsidiary. More recently, the team has been a crucial part of Google’s seminal robotics research projects such as SayCan and Robotics Transformer 1 (RT-1). After roughly a year as a separate entity, Everyday Robots is now reportedly being shut down and merged with teams in Google Research.

In a now-familiar pattern, even the biggest personal robotics companies cannot survive scrutiny when they have to prove their financial resilience to investors and stakeholders. While robotics is surely on the cusp of significant breakthroughs, the field still has many challenges to overcome before autonomous agents can be part of our lives in unstructured environments such as homes and offices. The past decade has seen companies both big and small try and fail to ship personal robots. Perhaps the industry today is best suited to applications in factories, warehouses, and even self-driving, where a basic set of rules can give structure to the task. Personal robots might be better left to researchers until we develop methods that crack the code for generalization.

Editor Comments

Daniel: I think this story points to an always-interesting question about the role of moonshots as a forcing function for considerable advances in applied research, and the difficulty of making them financially viable. There’s no question in my mind that organizations working on hard, far-out technology like Everyday Robots or Willow Garage should exist; the question to consider is how they should be funded. The nature of the work limits the options: moonshots are high-effort, high-risk endeavors that often take a long time to deliver value. That isn’t a good fit for a venture capitalist, but it is a fit for someone prepared to lose a few tens of millions of dollars: namely, Google, your favorite billionaire, or a government willing to invest in long-term R&D. But we’ve seen many companies and research organizations funded this way in the past either become more product-focused at the expense of their original vision or shut down. I think a broad strategy that invests in a range of different “bets” and shuts down the ones that aren’t working is sensible, but I also feel that for some problems, the timeline for even knowing whether a solution is feasible is very, very long. Prematurely calling it quits and pulling funding from a problem that, unbeknownst to us, is close to a breakthrough isn’t a great outcome, and punctuated funding in general is bound to significantly slow down progress in a given area. I’m not going to pretend I have the answers to the quandaries of funding science and long-term projects, but I’m sure there are ways we can become more principled about some of these questions.

Research Highlight: Poisoning Web-Scale Training Datasets is Practical

Source: https://arxiv.org/abs/2302.10149

Summary

Current state-of-the-art machine learning models are often trained on massive amounts of data collected from the internet. New work by Google, ETH Zurich, NVIDIA, and Robust Intelligence develops two types of attacks that poison these datasets in order to influence the behavior of models trained on them. These attacks are cheap, feasible, and widely applicable: they can be used on image-text datasets (such as those used to train Stable Diffusion and CLIP) and on web-scale sources like Wikipedia (as used for large language models like ChatGPT).

Overview

The first type of attack, split-view data poisoning, applies to datasets that are distributed as an index of image URLs. To build such a dataset, a curator checks each image at some point in time, saves its URL, and assigns it a label such as a text caption. Later, dataset users download each image from the saved URL. However, the image behind a URL may change between curation and download. The attack exploits this gap: the attacker buys expired domains that host URLs in the dataset and serves a different image at each listed URL (the label, which is stored in the index, cannot be changed). Across 10 different datasets, the authors find that enough expired domains can be purchased to mount the attack for a total cost of at most $10,000 USD, so the attack is practical and not too expensive.
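To make the cost side of split-view poisoning concrete, here is a minimal sketch of how one might estimate what fraction of a URL-indexed dataset a given budget could control. It assumes we already know which domains have expired and what each costs to re-register. The domain names, prices, and the greedy "most URLs per dollar" strategy are hypothetical illustrations, not the paper's procedure.

```python
from collections import Counter
from urllib.parse import urlparse


def purchasable_fraction(urls, expired_prices, budget):
    """Greedy estimate of the share of a URL-indexed dataset an attacker could control.

    urls: every image URL in the dataset index.
    expired_prices: hypothetical {domain: re-registration price in USD} for expired domains.
    budget: total USD the attacker is willing to spend.
    Returns (fraction_controlled, amount_spent, domains_bought).
    """
    # Count how many dataset entries each domain hosts.
    per_domain = Counter(urlparse(u).hostname for u in urls)
    # Only expired domains that actually host dataset URLs are worth buying;
    # rank them by dataset entries gained per dollar (a heuristic, not an optimal knapsack).
    candidates = sorted(
        (d for d in expired_prices if per_domain[d] > 0),
        key=lambda d: per_domain[d] / expired_prices[d],
        reverse=True,
    )
    spent, controlled, bought = 0.0, 0, []
    for domain in candidates:
        price = expired_prices[domain]
        if spent + price <= budget:
            spent += price
            controlled += per_domain[domain]
            bought.append(domain)
    return controlled / len(urls), spent, bought
```

For example, with ten URLs of which three live on an expired `a.example` priced at $12, a $15 budget buys that one domain and controls 30% of this toy index. A real index has millions of URLs, so the same arithmetic produces far smaller fractions.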

The other type of attack, frontrunning, applies to data sources that publish periodic snapshots, such as Wikipedia. The attacker predicts when a given Wikipedia article will be scraped for a snapshot and makes a poisoning edit just before then, so moderators do not have time to identify and revert the malicious edit. The authors find that they can accurately predict when a given article will be scraped as follows:

First, they note that Wikipedia publicly lists the time when it will start creating a snapshot. Then, by analyzing past snapshots, they find that Wikipedia uses several parallel crawlers to build each snapshot, and that articles are crawled in order of article ID. They can therefore measure how long after a previous snapshot’s start time an article was crawled, and extrapolate from that to predict when the article will be crawled for a new snapshot.
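The extrapolation step can be sketched as a simple linear fit. This is a minimal illustration under our own assumptions, not the authors' code. It treats the crawl offset (seconds after a snapshot starts) as roughly linear in article ID within a single crawler's range, and ignores the details of how work is split across parallel crawlers. The observation values, the `margin`, and the function names are hypothetical.

```python
from datetime import datetime, timedelta


def fit_crawl_rate(observations):
    """Least-squares fit of crawl offset (seconds after snapshot start) against article ID.

    observations: (article_id, offset_seconds) pairs measured on a past snapshot,
    assumed to come from the same crawler. Returns (intercept_seconds, seconds_per_id).
    """
    n = len(observations)
    mean_x = sum(x for x, _ in observations) / n
    mean_y = sum(y for _, y in observations) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in observations)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in observations)
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope


def schedule_edit(article_id, next_snapshot_start, observations, margin=timedelta(minutes=1)):
    """Predict when article_id is crawled in the next snapshot, and time an edit `margin` earlier."""
    intercept, slope = fit_crawl_rate(observations)
    predicted = next_snapshot_start + timedelta(seconds=intercept + slope * article_id)
    return predicted - margin, predicted
```

With past offsets of 100 s, 1,100 s, and 2,100 s for article IDs 0, 1,000, and 2,000, the fit predicts article 1,500 will be crawled 1,600 s after the next snapshot starts. That short window is exactly what randomizing the crawl order would take away.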

To thwart future attacks of this kind, the researchers also developed defenses and alerted data owners to the risk. One way to defend against split-view poisoning is to store a cryptographic hash of each image alongside its URL, so that downloaders can discard any image whose hash no longer matches the original. However, a cryptographic hash also changes under common benign modifications to images, such as cropping or resizing, so legitimately updated images would be discarded too. To defend against frontrunning, Wikipedia could randomize the order in which it crawls articles, or take an initial snapshot and then give trusted moderators time to make revisions before finalizing it. After the researchers contacted dataset maintainers about these defenses, several of them have been implemented; for instance, SHA-256 hashes were added to several LAION datasets.
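Here is a minimal sketch of the hash-based defense, using Python's standard `hashlib` SHA-256. The `IndexEntry` layout, `make_entry`, and the `fetch` callable are hypothetical stand-ins for a real dataset index and downloader. Note that the check also rejects any benign edit, such as a resized copy, which is exactly the limitation described above.

```python
import hashlib
from typing import NamedTuple


class IndexEntry(NamedTuple):
    url: str
    caption: str
    sha256: str  # hex digest recorded when the curator first saw the image


def make_entry(url, caption, image_bytes):
    """Curation time: store the image's SHA-256 next to its URL and label."""
    return IndexEntry(url, caption, hashlib.sha256(image_bytes).hexdigest())


def filter_downloads(index, fetch):
    """Download time: keep (caption, bytes) only when the bytes still match the stored hash.

    fetch: hypothetical callable mapping a URL to downloaded bytes, or None on failure.
    Returns (kept_pairs, rejected_urls).
    """
    kept, rejected = [], []
    for entry in index:
        data = fetch(entry.url)
        if data is not None and hashlib.sha256(data).hexdigest() == entry.sha256:
            kept.append((entry.caption, data))
        else:
            # Changed content (malicious or benign) or a failed download is dropped.
            rejected.append(entry.url)
    return kept, rejected
```

If an attacker takes over the domain behind one entry and serves different bytes, that entry is rejected while untouched entries pass through unchanged.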

Why does it matter?

The data requirements of large deep learning models have necessitated massive internet datasets, and it is extremely difficult to curate, filter, or maintain the quality of such datasets over time. Even without any malicious actors, this already causes problems such as bias against protected subgroups and undesired content. If malicious actors target these datasets, even more harm could follow.

The paper’s analysis shows that the attacks are both feasible and cheap. For instance, past work suggests that poisoning about 0.01% of a dataset is enough for a successful attack, and for all 10 analyzed image datasets, that much data can be purchased for at most $10,000 USD. Moreover, the analysis shows that users are still downloading these datasets, even ones released a decade ago. Still, the researchers find no evidence that a split-view attack has been carried out before.
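To put the 0.01% figure in perspective, consider a hypothetical dataset of 400 million image-text pairs (on the order of LAION-400M). The size is illustrative only:

$$
0.01\% \times \left(4 \times 10^{8}\right) = 10^{-4} \times 4 \times 10^{8} = 4 \times 10^{4} \ \text{poisoned pairs}
$$

In other words, roughly 40,000 URLs would need to point at attacker-controlled content. Because many URLs in a web-scraped index share a few domains, buying a relatively small number of expired domains can be enough to cover that many entries.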

Author Q&A

We talked to Professor Florian Tramèr, an author of the paper, to find out more:

Q: The studied poisoning outcomes (like changing an image classifier or an NSFW classifier’s prediction) are fairly general. Are there any specific, harmful poisoning outcomes that you would like to note?

This is an area that deserves to be further explored. There are many research papers that show that one can embed backdoors by poisoning ML models, but how this ability would be useful hasn't been explored as much, and depends a lot on the concrete application.

Here are a few examples that I think are compelling (many of these are for LLMs):

some researchers from Cornell and Tel Aviv have a very nice paper that shows how to poison a code-completion model (like Copilot) so that it generates insecure code in specific contexts (e.g., only for code from the Linux kernel): https://www.usenix.org/conference/usenixsecurity21/presentation/schuster

you could poison ChatGPT / Bing so that it advertises some product you care about (or so that it criticizes your competitors).

you could poison LLMs to make them more susceptible to prompt injection attacks (e.g., make it so that the model very easily switches its behavior upon seeing some specific trigger). You could then use this to reliably force an LLM-based application to do bad things like stealing sensitive user data: https://arxiv.org/abs/2302.12173

models like CLIP are becoming the go-to backbone for a huge number of future applications, including security-critical ones. E.g., CLIP was used to train an NSFW filter for Stable Diffusion, and we found in a previous project that it is a decent backbone for facial recognition. So if you could introduce a backdoor that confuses CLIP, you are indirectly poisoning all these applications.

Q: Do you have any idea on whether the frontrunning attack or anything similar has been exploited on Wikipedia or other platforms?

We looked for evidence of our split-view poisoning attack and couldn't find any. We did not search for evidence of the Wikipedia frontrunning attack however.

I think it's likely that some bad edits make it into Wikipedia's snapshots from time to time, simply because many edits take a while to be reverted. This would be an implicit example of our frontrunning attack. But my intuition is that no one (yet) has been explicitly timing their edits specifically to enter a snapshot.

I believe this because the fact that these snapshots even exist and are being used to train ML models was clearly not common knowledge. But now that the cat is out of the bag, I think future model developers will have to take additional defensive measures if they plan on using Wikipedia snapshots.

Q: Is there anything else you would like to share?

One big takeaway for me from this project is that there can be many "traditional" security vulnerabilities in machine learning pipelines. The issues we uncovered have a lot more to do with traditional web security and data integrity protection than with anything specific to ML. So far, the research on ML security has been mostly "model centric", focusing on security vulnerabilities that stem directly from model training or inference. I think we now need to take a broader "systems" view of the ML ecosystem (and in particular of the data lifecycle) to understand what other glaring security or privacy issues we may have missed.

New from the Gradient

Ken Liu: What Science Fiction Can Teach Us

Hattie Zhou: Lottery Tickets and Algorithmic Reasoning in LLMs

Other Things That Caught Our Eyes

News

Planning for AGI and beyond “If AGI is successfully created, this technology could help us elevate humanity by increasing abundance, turbocharging the global economy, and aiding in the discovery of new scientific knowledge that changes the limits of possibility.”

OpenAI has grand ‘plans’ for AGI. Here’s another way to read its manifesto | The AI Beat “Am I the only one who found Open AI’s latest blog post, ‘Planning for AGI and Beyond,’ problematic?”

‘Hollywood 2.0’: How the Rise of AI Tools Like Runway Are Changing Filmmaking “When “Everything Everywhere All At Once’s” visual effects artist Evan Halleck was working on the rock universe scene, he brought in cutting-edge artificial intelligence tools from Runway — such as the green screen tool that removes the background from images.”

How I Broke Into a Bank Account With an AI-Generated Voice “Banks in the U.S. and Europe tout voice ID as a secure way to log into your account. I proved it's possible to trick such systems with free or cheap AI-generated voices.”

China tells big tech companies not to offer ChatGPT services “Regulators have told major Chinese tech companies not to offer ChatGPT services to the public amid growing alarm in Beijing over the AI-powered chatbot's uncensored replies to user queries.”

Spotify’s new AI-powered DJ will build you a custom playlist and talk over the top of it “Spotify’s newest feature is an AI-powered DJ that curates and commentates on an ever-changing personalized playlist. Spotify describes it as an ‘AI DJ in your pocket’ that ‘knows you and your music taste so well that it can choose what to play for you.’”

Generative AI Is Coming For the Lawyers “David Wakeling, head of London-based law firm Allen & Overy's markets innovation group, first came across law-focused generative AI tool Harvey in September 2022. He approached OpenAI, the system’s developer, to run a small experiment.”

Papers

Daniel: An interesting new Anthropic paper observes that for language models trained with reinforcement learning from human feedback (RLHF; why is this acronym so similar to GLHF?), the capacity for “moral self-correction” emerges at 22B parameters and typically improves with increasing model size. For the authors, moral self-correction means the ability to “avoid producing harmful outputs when instructed to do so.” According to their experiments, you can simply tell an LLM things like “don’t be biased” and expect a strong reduction in its bias. The researchers hypothesize that this capacity comes from (1) better instruction-following capabilities and (2) learned normative concepts of harm. It seems apparent that the combination of these two things would also enable instructing a model to produce *more* harmful outputs; the authors mention this in the dual-use note in their “Limitations & Future Work” section. I do wonder how these issues will be studied going forward, and how we might interrogate the “moral capacities” of language models and their steerability in a more fine-grained manner.

Tanmay: Google recently released its work on using text-to-image generative models to perform data augmentation on videos for robotics. The project is named ROSIE, for “Scaling Robot Learning with Semantically Imagined Experience,” and uses diffusion models to modify videos collected for an imitation learning pipeline: adding distractors, changing backgrounds, and even changing the object being manipulated. The augmented videos are photo-realistic, and results from various real-world experiments show that the method learns more robust policies than baselines, including skills that appear only in the augmented dataset and not in the original one. For instance, the researchers teach the robot to pick up a microfiber cloth by augmenting a video that showed it picking up a bag of chips. While this method is certainly exciting for scaling up robot learning (imagine torchvision transforms: RandomBackground, RandomObject, RandomDistractors), I am curious to learn to what extent the augmented videos are physically plausible. Are transparent or specular objects inserted correctly into the scene? Is the physics of manipulating deformable objects realistic? Hope to see more research along these lines soon!

Derek: I found the new paper “Exploring the Representation Manifolds of Stable Diffusion Through the Lens of Intrinsic Dimension” by researchers at Pacific Northwest National Laboratory pretty interesting. The authors investigate the geometry of Stable Diffusion’s internal representations across different text prompts. The intrinsic dimensions of the manifolds formed by certain latent representations differ significantly across denoising steps. Some internal representations (from the UNet bottleneck) form higher-dimensional manifolds for text prompts that occur less frequently (as measured by perplexity under a language model). However, other internal representations show no such correlation, so this phenomenon requires more study.
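This summary does not say which estimator the paper uses. As one illustration of how the intrinsic dimension of a set of representations can be measured, here is a minimal NumPy sketch of the TwoNN estimator (Facco et al., 2017), a common choice. Treat it as an assumption, not the authors' method. It uses only each point's first and second nearest-neighbor distances.

```python
import numpy as np


def twonn_intrinsic_dimension(points: np.ndarray) -> float:
    """TwoNN estimate of intrinsic dimension for a point cloud (rows = points, no duplicates)."""
    points = np.asarray(points, dtype=np.float64)
    # Pairwise squared distances via |x|^2 + |y|^2 - 2 x.y (O(N^2) memory).
    sq_norms = (points ** 2).sum(axis=1)
    sq_dists = sq_norms[:, None] + sq_norms[None, :] - 2.0 * points @ points.T
    dists = np.sqrt(np.clip(sq_dists, 0.0, None))
    np.fill_diagonal(dists, np.inf)  # a point is not its own neighbor
    # First and second nearest-neighbor distances for every point.
    r = np.sort(dists, axis=1)[:, :2]
    mu = r[:, 1] / r[:, 0]
    # Under TwoNN's local-uniformity assumption, mu follows a Pareto law with
    # exponent d; its maximum-likelihood estimate is N / sum(log mu).
    return float(len(mu) / np.log(mu).sum())
```

As a sanity check, 1,000 points sampled from a 2-D square and linearly embedded in 10 dimensions should give an estimate close to 2. One could apply the same function to, say, flattened activations from one layer collected over many prompts at a fixed denoising step. That collection pipeline is not shown here.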

Tweets

whoops this one’s about vcs

things that go brrrr

Closing Thoughts

Have something to say about this edition’s topics? Shoot us an email at editor@thegradient.pub and we will consider sharing the most interesting reader thoughts in the next newsletter! For feedback, you can also reach Daniel directly at dbashir@hmc.edu or on Twitter. If you enjoyed this newsletter, consider donating to The Gradient via a Substack subscription, which helps keep this grad-student / volunteer-run project afloat. Thanks for reading the latest Update from the Gradient!